About Us

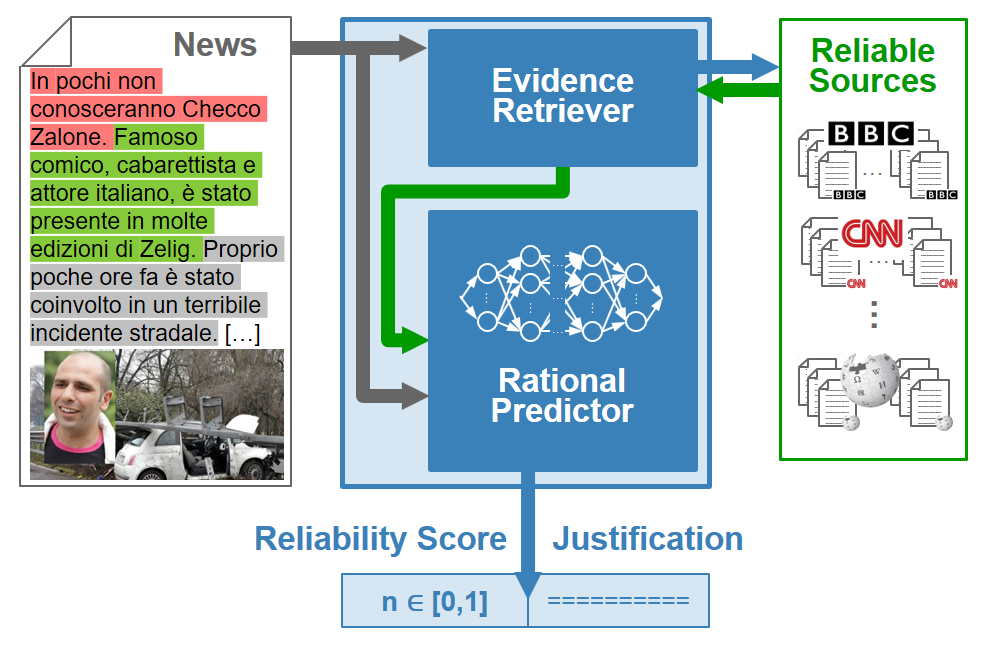

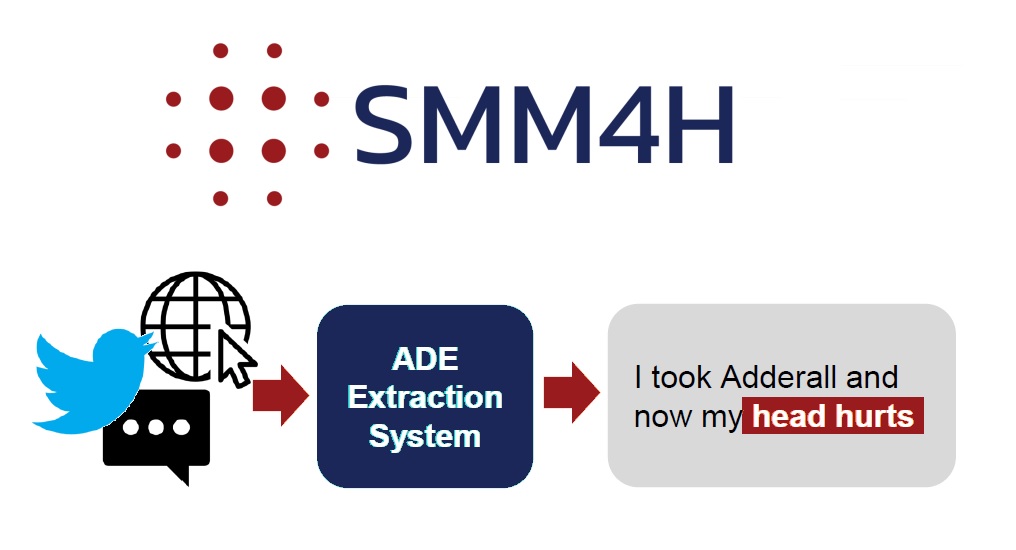

The Artificial Intelligence Laboratory of Udine (AILAB Udine), led by Professor Giuseppe Serra, conducts research in Deep Learning, Neural Networks, Artificial Intelligence, Machine Learning, Multimedia, Natural Language Processing, Computer Vision, and Social Monitoring. The laboratory, which was founded in 1984 by Professor Carlo Tasso, has a long history of innovation and of active support for local activities.

Prospective Ph.D. Students

If you are a prospective student interested in Artificial Intelligence, Machine Learning, and Deep Learning research at the University of Udine, please read about our Ph.D. admissions process and contact us (Giuseppe Serra, giuseppe.serra@uniud.it). If you are applying to our Ph.D. program in Computer Science and are interested in our research, please mention this in your statement of purpose.