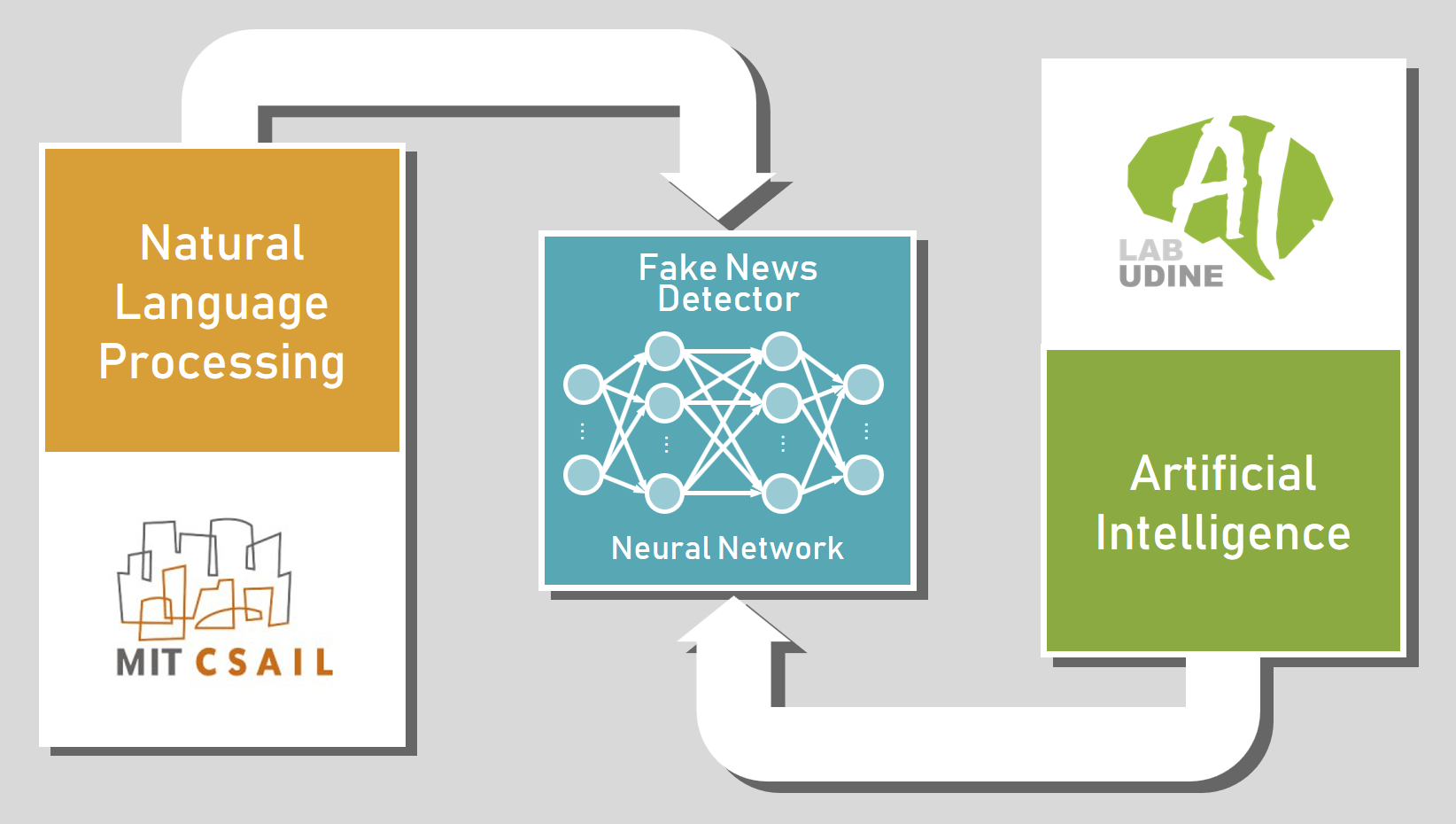

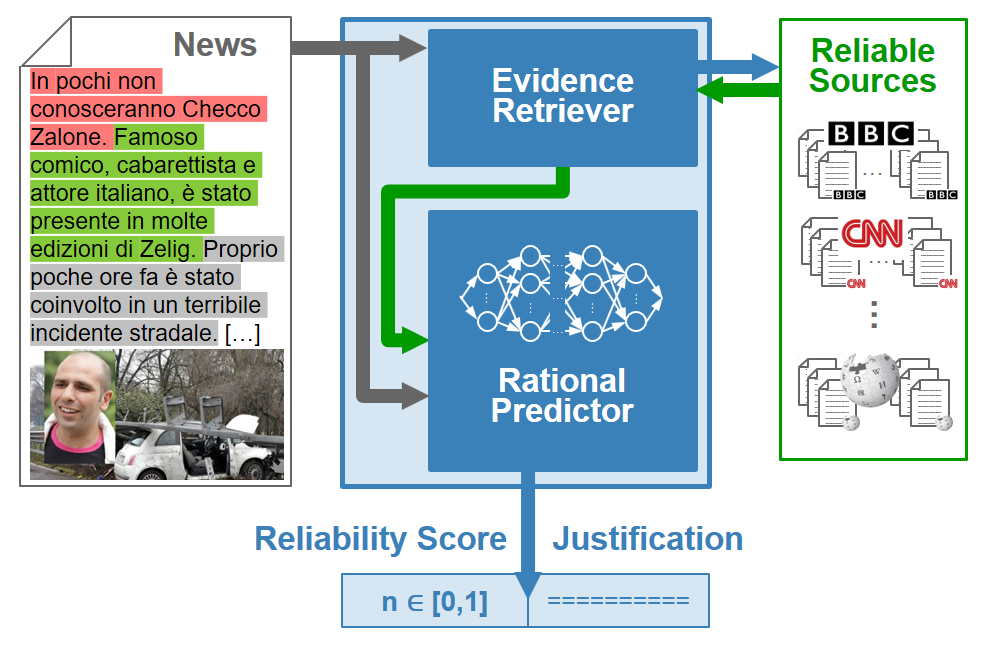

In the last few years, we have witnessed an explosion of news sharing and commenting on social networks. While this practice has positive aspects, since it stimulates debate, it has been polluted by the spread of unreliable news, generally referred to as Fake News. Because this content is often produced with malicious intent and has a tremendous real-world political and social impact, the Natural Language Processing (NLP) community has been called upon to propose algorithms for its identification. Most existing work relies on stylistic and linguistic peculiarities of Fake News texts (such as excessive use of emphasis and hyperbolic expressions). Over time, however, Fake News tends to become stylistically and linguistically more similar to Real News, so that Fact Checking remains the only reliable approach to isolate it. In this project, we employ Artificial Intelligence to assess the reliability of news on the basis of not only intrinsic criteria (i.e., style and language) but also extrinsic ones (i.e., context, factual evidence and source credibility). In particular, AILAB-Udine and MIT-CSAIL are working together on a model based on Neural Networks that will analyze the news and extract information from the web to estimate a reliability score, providing with every assessment a set of justifications, so that readers can read the news critically and make informed decisions.

The project is supported by MISTI’s Global Seed Funds program.

Publications

- Portelli Beatrice, Zhao Jason, Schuster Tal, Serra Giuseppe, Santus Enrico. Distilling the Evidence to Augment Fact Verification Models. Proceedings of the Third Workshop on Fact Extraction and VERification (FEVER), pp. 47-51, 2020.

Research Group

- Serra Giuseppe (AILAB-Udine member)

- Barzilay Regina (MIT-CSAIL)

- Santus Enrico (MIT-CSAIL)

- Schuster Tal (MIT-CSAIL)

- Portelli Beatrice (AILAB-Udine member)

- Dominici Gabriele (AILAB-Udine member)

- Tasso Carlo (AILAB-Udine member)