EQAI – European Summer School on Quantum AI

AILAB-Udine is proud to be one of the organizers of the European Summer School on Quantum AI (EQAI), which has been held yearly since 2022. Find the latest information on the official website: http://eqai.eu. You can also subscribe to the official Google group to be notified of any major update: https://groups.google.com/g/eqai

The summer school will take place in Lignano Sabbiadoro (Italy) on September 01 – 05, 2025. All the speakers will be there in person to make the experience more immersive and interactive! Find out more about the past editions at: https://eqai.eu/past-editions/

EQAI 2024 – Details

The summer school will take place in Lignano Sabbiadoro (Italy) on September 02 – 06, 2024.

EQAI 2023 – Details

The summer school will take place in Udine (Italy) on May 29 – June 1, 2023, but it can also be followed remotely. The main topic of this edition is "Quantum Machine and Deep Learning". Deadlines for application are:
▶ on-site: April 29, 2023,
▶ remote: May 15, […]

Quantum Machine Learning for the Noisy Intermediate-Scale Quantum Era

Quantum machine learning (QML) is an emerging field that combines the principles of quantum computing with classical machine learning techniques. It aims to exploit the unique properties of quantum systems, which for certain problems may allow computations exponentially faster than on classical devices, to improve the processing and analysis of complex data. This potential makes QML a very promising frontier. However, in the current Noisy Intermediate-Scale Quantum (NISQ) era, in which noise in quantum devices limits their scalability, it is crucial to develop specific techniques to fully exploit quantum capabilities. This research focuses on the development and optimisation of QML models suitable for NISQ environments. A key aspect of our work is adapting established training techniques from classical machine learning, such as batch normalisation and regularisation, for use in Quantum Neural Networks (QNNs). The project also explores the potential of Quanvolutional Neural Networks and Quantum Kernel methods. These efforts are aimed at improving the functionality and […]
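To make the idea of a quantum kernel method more concrete, here is a minimal sketch of a fidelity-based quantum kernel, simulated classically with NumPy. It is not the project's implementation. The feature map (one RY(x_i) rotation per feature, with no entangling gates) and the names `angle_encode`, `fidelity_kernel` and `kernel_matrix` are assumptions chosen for illustration. The simulation is exact and noise-free, so it ignores the NISQ noise discussed above.

```python
import numpy as np

def angle_encode(x):
    """Hypothetical feature map: each feature x_i is loaded on its own qubit
    by applying RY(x_i) to |0>, giving cos(x_i/2)|0> + sin(x_i/2)|1>.
    The n-qubit state is the tensor (Kronecker) product of these qubits."""
    state = np.array([1.0])
    for xi in x:
        qubit = np.array([np.cos(xi / 2), np.sin(xi / 2)])
        state = np.kron(state, qubit)
    return state  # statevector of length 2**len(x)

def fidelity_kernel(x, y):
    """Fidelity kernel k(x, y) = |<phi(x)|phi(y)>|^2, computed by exact,
    noise-free statevector simulation."""
    overlap = np.vdot(angle_encode(x), angle_encode(y))
    return float(np.abs(overlap) ** 2)

def kernel_matrix(X, Y):
    """Gram matrix K[i, j] = k(X[i], Y[j])."""
    return np.array([[fidelity_kernel(x, y) for y in Y] for x in X])

if __name__ == "__main__":
    # Toy 2-feature samples (illustrative values only)
    X = np.array([[0.1, 0.9], [1.5, -0.3], [0.2, 1.0]])
    print(np.round(kernel_matrix(X, X), 3))
```

This encoding produces an unentangled product state, so the kernel reduces to the closed form ∏ cos²((x_i − y_i)/2) and is easy to compute classically. Practical quantum kernels use entangling feature maps whose overlaps are estimated on hardware or simulators, and on NISQ devices those estimates are perturbed by noise. The resulting Gram matrix can be handed to a classical learner, for example scikit-learn's `SVC(kernel="precomputed")`.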

Metaverse Understanding

In recent years, the Metaverse has sparked increasing interest worldwide and is projected to reach a market size of more than $1000B by 2030. This is due to its many potential applications in highly heterogeneous fields, such as entertainment and multimedia consumption, training, and industry. This new technology raises many research challenges. Unlike traditional scene understanding, metaverse scenarios contain additional multimedia content, such as movies in virtual cinemas and operas in digital theaters, which greatly influences how relevant a metaverse is to a user query. For instance, if a user is looking for Impressionist exhibitions in a virtual museum, only the museums that showcase exhibitions featuring Impressionist painters should be considered relevant. We introduce the novel problem of text-to-metaverse retrieval, which aims to help users find the most suitable metaverse for a given textual query. It is a challenging task, since the multimedia content present in the metaverse greatly influences […]
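A retrieval system of this kind can be sketched as ranking candidate metaverses by their similarity to the query in a shared embedding space. The sketch below is a minimal illustration under strong assumptions, not the method introduced by the project. It assumes the text query, the 3D scene, and each multimedia item have already been embedded by some encoder (not shown). The fusion rule in `metaverse_embedding`, its `alpha` weight, and the toy 3-dimensional vectors are all hypothetical. The fusion step is included to mirror the point above that contained multimedia content (e.g. the paintings on display) should affect relevance, not just the scene itself.

```python
import numpy as np

def metaverse_embedding(scene_emb, media_embs, alpha=0.5):
    """Hypothetical fusion: mix the scene embedding with the mean embedding
    of the multimedia items the metaverse contains (paintings, movies...)."""
    media_mean = np.mean(media_embs, axis=0)
    return alpha * scene_emb + (1 - alpha) * media_mean

def cosine_scores(query_emb, metaverse_embs):
    """Cosine similarity between one query vector and each row of
    metaverse_embs (shape: n_metaverses x dim)."""
    q = query_emb / np.linalg.norm(query_emb)
    M = metaverse_embs / np.linalg.norm(metaverse_embs, axis=1, keepdims=True)
    return M @ q

def rank_metaverses(query_emb, metaverse_embs, names, k=3):
    """Return the top-k (name, score) pairs, most relevant first."""
    scores = cosine_scores(query_emb, metaverse_embs)
    order = np.argsort(-scores)[:k]
    return [(names[i], float(scores[i])) for i in order]

if __name__ == "__main__":
    # Toy axes (illustrative only): [impressionism, museum, cinema]
    museum_scene = np.array([0.0, 1.0, 0.0])
    cinema_scene = np.array([0.0, 0.0, 1.0])
    impressionist_art = np.array([[1.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
    modern_art = np.array([[0.0, 0.0, 1.0]])
    movies = np.array([[0.0, 0.0, 1.0]])

    M = np.stack([
        metaverse_embedding(museum_scene, impressionist_art),
        metaverse_embedding(museum_scene, modern_art),
        metaverse_embedding(cinema_scene, movies),
    ])
    names = ["museum_impressionist", "museum_modern", "cinema"]
    query = np.array([0.5, 0.5, 0.0])  # "Impressionist exhibition in a museum"
    # Expected order: museum_impressionist, museum_modern, cinema
    print(rank_metaverses(query, M, names))
```

Both museums share the same scene embedding, so in this toy setup only the multimedia content separates them, which is the situation the Impressionist example describes.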

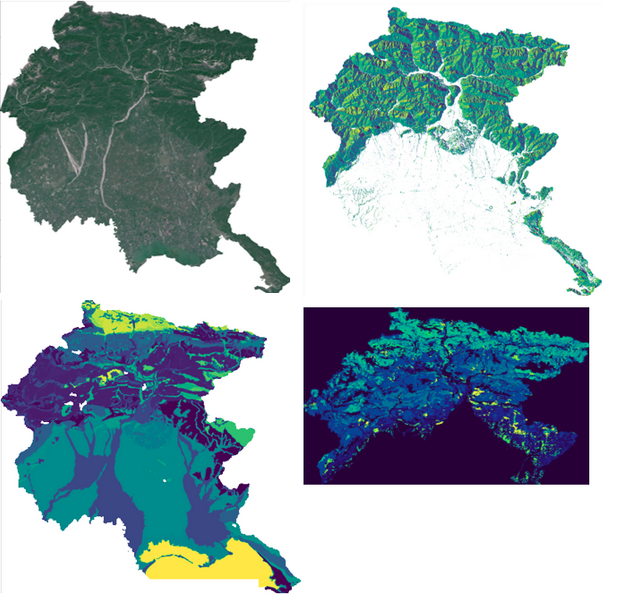

AI for Forestry Applications

This research project focuses on applying machine and deep learning methods to forestry. In particular, the main focus is forest growing stock prediction in the Friuli Venezia Giulia region (Italy), but the developed methods can be applied to estimate biophysical forest attributes over any large territory. This study will take into account different sources of data, such as forest inventory data from national surveys, multispectral satellite images, climatic data, and various environmental features collected through different services. Several methods will be applied to produce a growing stock volume map, which will be useful for creating management plans for forest areas in the region. The growing stock has traditionally been considered an important indicator of forest health and productivity. It is estimated through forest inventories, in which both qualitative and quantitative parameters are recorded to assess the overall health of growing forests. We will therefore produce results that can be considered as a basis […]
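The general workflow described above (learn a relationship between inventory plots and remotely sensed or climatic predictors, then apply it to every pixel to obtain a map) can be sketched as follows. This is a deliberately simplified illustration, not the project's method. It uses ordinary least squares on two predictors: NDVI, a standard vegetation index computed from red and near-infrared reflectance, and one climatic covariate. The choice of predictors, the linear model, the function names, and all numbers are assumptions, and the data are synthetic. Real studies would typically use richer feature sets, nonlinear learners, and proper validation.

```python
import numpy as np

def ndvi(nir, red):
    """Normalized Difference Vegetation Index: (NIR - Red) / (NIR + Red).
    Assumes strictly positive reflectance values."""
    nir = np.asarray(nir, dtype=float)
    red = np.asarray(red, dtype=float)
    return (nir - red) / (nir + red)

def fit_linear_model(X, y):
    """Ordinary least squares with an intercept column.
    Returns coefficients [intercept, w_1, ..., w_p]."""
    A = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef

def predict(coef, X):
    """Apply the fitted linear model to feature rows X."""
    return coef[0] + np.asarray(X) @ coef[1:]

if __name__ == "__main__":
    rng = np.random.default_rng(42)
    # Synthetic "inventory plots" (illustrative only, not real data)
    n_plots = 40
    nir = rng.uniform(0.2, 0.6, n_plots)
    red = rng.uniform(0.02, 0.15, n_plots)
    temp = rng.uniform(5.0, 15.0, n_plots)  # hypothetical mean annual temperature (°C)
    X_plots = np.column_stack([ndvi(nir, red), temp])
    # Synthetic growing stock volume (m^3/ha) with some random noise
    volume = 100 + 300 * X_plots[:, 0] - 4 * temp + rng.normal(0, 10, n_plots)
    coef = fit_linear_model(X_plots, volume)

    # Apply the fitted model to each pixel of a tiny 2x2 "raster" to obtain a map
    nir_img = np.array([[0.50, 0.40], [0.30, 0.55]])
    red_img = np.array([[0.05, 0.08], [0.10, 0.04]])
    temp_img = np.array([[8.0, 9.5], [12.0, 7.0]])
    pixels = np.column_stack([ndvi(nir_img, red_img).ravel(), temp_img.ravel()])
    volume_map = predict(coef, pixels).reshape(nir_img.shape)
    print(np.round(volume_map, 1))
```

Fitting happens at plot level, where field measurements exist. Prediction happens at pixel level, which is what turns point-wise inventory data into a wall-to-wall growing stock map.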