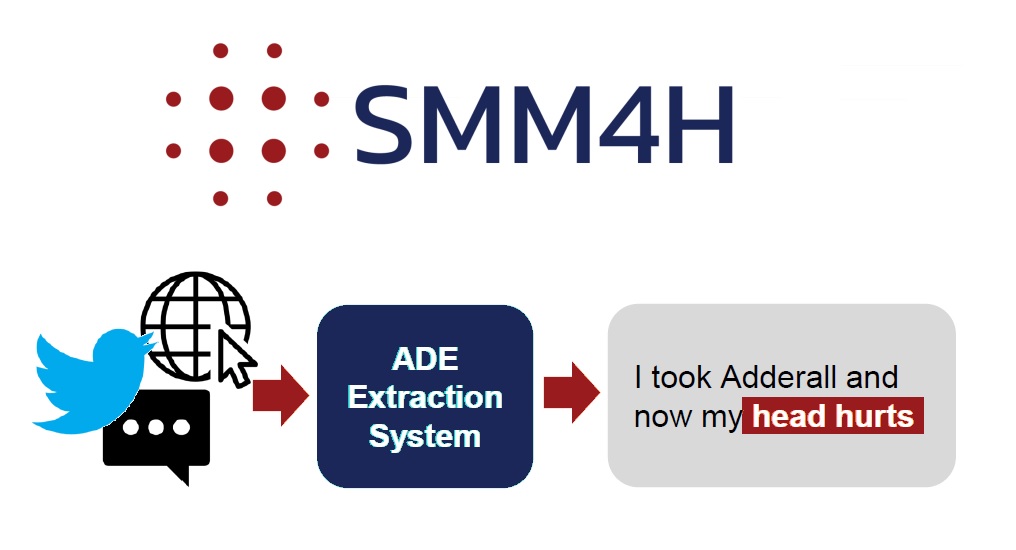

Pharmacovigilance monitors drugs on the market to ensure that unexpected effects (Adverse Drug Events, or ADEs) are identified promptly and that action is taken to minimize their harm. Patients have started reporting such ADEs on social media, health forums and similar outlets instead of using formal reporting channels. Given the need to monitor these sources for pharmacovigilance purposes, systems for the automatic extraction of ADEs are becoming an important research topic in the NLP community, and recent Shared Tasks on ADE extraction have attracted numerous focused contributions. Our research group has been working on an architecture for automatic ADE extraction from social media texts, with a focus on maintaining high performance across different text types (from short, noisy tweets to longer, more formal medical forum posts). Our latest experiments led us to the top of the leaderboard in one of the most relevant and active Shared Tasks in this field: SMM4H’19 (Social Media Mining […]